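# Packages this section relies on
library(dplyr)      # %>%, filter(), select(), mutate(), case_when()
library(ggplot2)
library(ggrepel)    # geom_text_repel()
library(patchwork)  # `|` layout operator and plot_annotation()

# Prepare data for plotting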

plot_data <- pixar_combined %>%
  filter(!is.na(rotten_tomatoes) | !is.na(metacritic) | !is.na(critics_choice)) %>%
  select(film, year, franchise, rotten_tomatoes, metacritic, critics_choice) %>%
  mutate(
    # Label only outliers and top performers
    label = case_when(
      franchise == "Cars" ~ film,
      film %in% c("Toy Story", "Toy Story 2", "WALL-E", "Ratatouille",
                  "Finding Nemo", "Inside Out") ~ film,
      TRUE ~ ""
    ),
    # Color by franchise
    franchise_color = case_when(
      franchise == "Cars" ~ "Cars",
      franchise == "Toy Story" ~ "Toy Story",
      franchise %in% c("Finding Nemo/Dory", "The Incredibles") ~ "Other Franchise",
      TRUE ~ "Standalone"
    )
  )

# Create three scatter plots

p1 <- plot_data %>%
  ggplot(aes(x = rotten_tomatoes, y = metacritic)) +
  geom_abline(intercept = 0, slope = 1, linetype = "dashed",
              color = "gray50", linewidth = 0.5, alpha = 0.6) +
  geom_point(aes(color = franchise_color), size = 3, alpha = 0.8) +
  geom_text_repel(aes(label = label, color = franchise_color),
                  size = 3, max.overlaps = 20,
                  box.padding = 0.5, force = 2) +
  scale_color_manual(
    values = c(
      "Cars" = "#DC143C",            # Crimson red for underperformers
      "Toy Story" = "#4169E1",       # Royal blue for top franchise
      "Other Franchise" = "#32CD32", # Lime green
      "Standalone" = "#808080"       # Gray
    )
  ) +
  labs(
    x = "Rotten Tomatoes Score",
    y = "Metacritic Score",
    color = NULL
  ) +
  theme_minimal(base_size = 11) +
  theme(
    legend.position = "none",
    panel.grid.minor = element_blank(),
    plot.margin = margin(10, 10, 10, 10)
  )

p2 <- plot_data %>%
  ggplot(aes(x = rotten_tomatoes, y = critics_choice)) +
  geom_abline(intercept = 0, slope = 1, linetype = "dashed",
              color = "gray50", linewidth = 0.5, alpha = 0.6) +
  geom_point(aes(color = franchise_color), size = 3, alpha = 0.8) +
  geom_text_repel(aes(label = label, color = franchise_color),
                  size = 3, max.overlaps = 20,
                  box.padding = 0.5, force = 2) +
  scale_color_manual(
    values = c(
      "Cars" = "#DC143C",
      "Toy Story" = "#4169E1",
      "Other Franchise" = "#32CD32",
      "Standalone" = "#808080"
    )
  ) +
  labs(
    x = "Rotten Tomatoes Score",
    y = "Critics' Choice Score",
    color = NULL
  ) +
  theme_minimal(base_size = 11) +
  theme(
    legend.position = "none",
    panel.grid.minor = element_blank(),
    plot.margin = margin(10, 10, 10, 10)
  )

p3 <- plot_data %>%
  ggplot(aes(x = metacritic, y = critics_choice)) +
  geom_abline(intercept = 0, slope = 1, linetype = "dashed",
              color = "gray50", linewidth = 0.5, alpha = 0.6) +
  geom_point(aes(color = franchise_color), size = 3, alpha = 0.8) +
  geom_text_repel(aes(label = label, color = franchise_color),
                  size = 3, max.overlaps = 20,
                  box.padding = 0.5, force = 2) +
  scale_color_manual(
    values = c(
      "Cars" = "#DC143C",
      "Toy Story" = "#4169E1",
      "Other Franchise" = "#32CD32",
      "Standalone" = "#808080"
    ),
    name = NULL
  ) +
  labs(
    x = "Metacritic Score",
    y = "Critics' Choice Score",
    color = NULL
  ) +
  theme_minimal(base_size = 11) +
  theme(
    legend.position = "bottom",
    panel.grid.minor = element_blank(),
    plot.margin = margin(10, 10, 10, 10)
  )
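
# A possible refactor, sketched under assumptions: the three scatter plots in
# this section differ only in their axis columns, axis labels, and legend
# position, so a small helper could build all of them. `score_scatter()` and
# `franchise_palette` are hypothetical names, not part of the original script.
# The helper expects a data frame with `label` and `franchise_color` columns,
# as created in the data preparation step.
library(ggplot2)
library(ggrepel)

franchise_palette <- c(
  "Cars" = "#DC143C",
  "Toy Story" = "#4169E1",
  "Other Franchise" = "#32CD32",
  "Standalone" = "#808080"
)

score_scatter <- function(data, x, y, x_lab, y_lab, legend = "none") {
  # {{ x }} and {{ y }} forward unquoted column names into aes()
  ggplot(data, aes(x = {{ x }}, y = {{ y }})) +
    geom_abline(intercept = 0, slope = 1, linetype = "dashed",
                color = "gray50", linewidth = 0.5, alpha = 0.6) +
    geom_point(aes(color = franchise_color), size = 3, alpha = 0.8) +
    geom_text_repel(aes(label = label, color = franchise_color),
                    size = 3, max.overlaps = 20,
                    box.padding = 0.5, force = 2) +
    scale_color_manual(values = franchise_palette, name = NULL) +
    labs(x = x_lab, y = y_lab) +
    theme_minimal(base_size = 11) +
    theme(
      legend.position = legend,
      panel.grid.minor = element_blank(),
      plot.margin = margin(10, 10, 10, 10)
    )
}

# Example usage, intended to match the Metacritic vs. Critics' Choice panel:
# p3_alt <- score_scatter(plot_data, metacritic, critics_choice,
#                         "Metacritic Score", "Critics' Choice Score",
#                         legend = "bottom")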

# Combine with patchwork

combined_plot <- (p1 | p2 | p3) +
  plot_annotation(
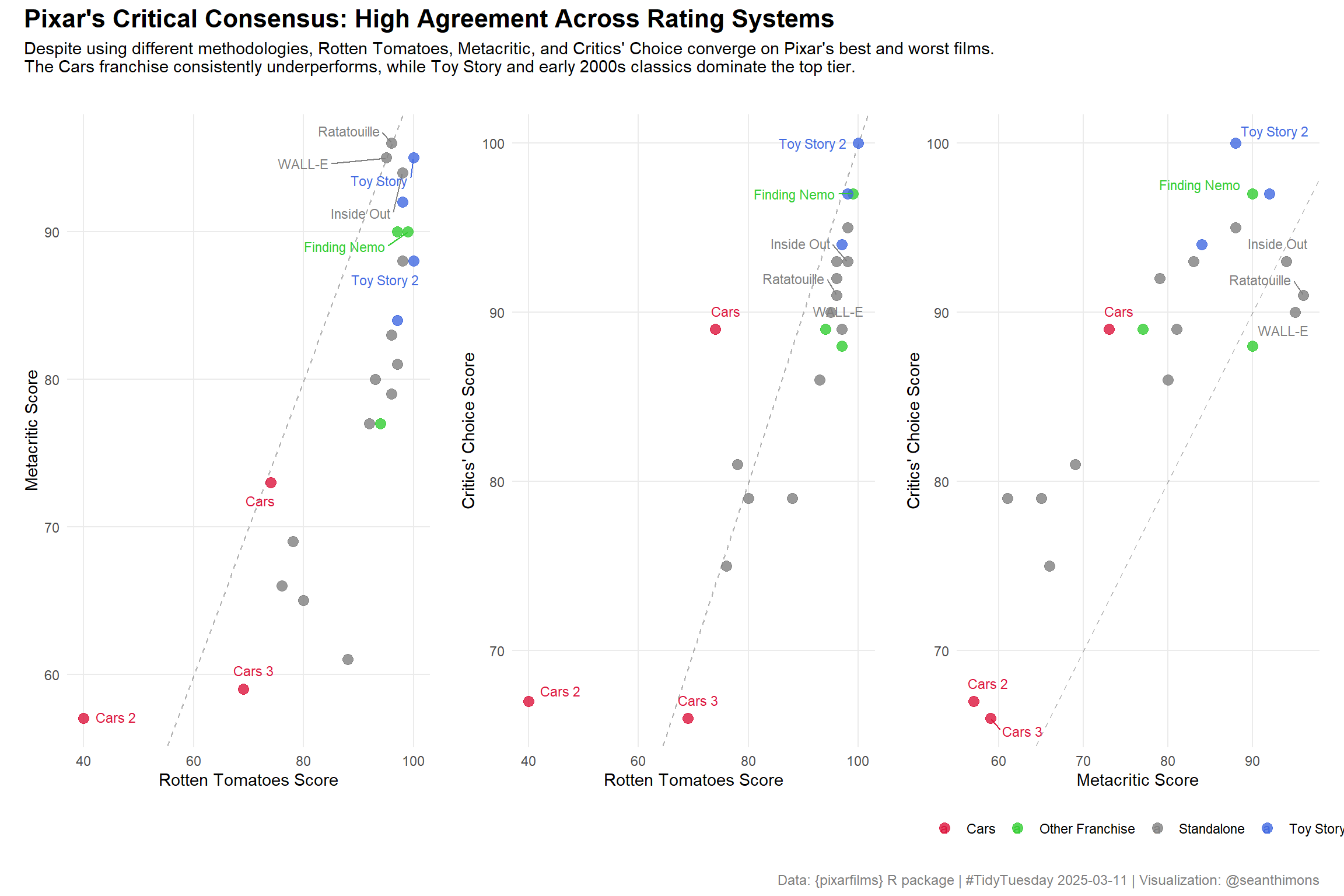

    title = "Pixar's Critical Consensus: High Agreement Across Rating Systems",
    subtitle = "Despite using different methodologies, Rotten Tomatoes, Metacritic, and Critics' Choice converge on Pixar's best and worst films.\nThe Cars franchise consistently underperforms, while Toy Story and early 2000s classics dominate the top tier.",
    caption = "Data: {pixarfilms} R package | #TidyTuesday 2025-03-11 | Visualization: @seanthimons",
    theme = theme(
      plot.title = element_text(size = 16, face = "bold", hjust = 0),
      plot.subtitle = element_text(size = 11, hjust = 0, margin = margin(b = 15)),
      plot.caption = element_text(size = 9, hjust = 1, color = "gray50")
    )
  )

combined_plot